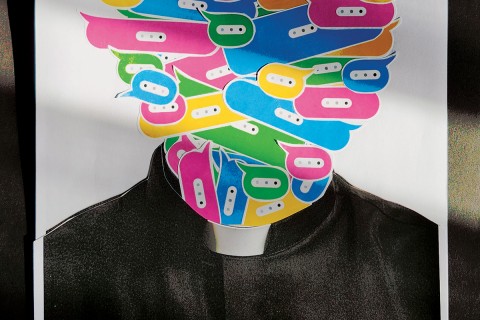

The problem with artificial intelligence is us

As long as AI is trained on human behavior, it will tend to replicate our worst flaws.

The race to create the best artificial intelligence chatbot is on. At the time of writing, Google is unveiling its new chatbot, Bard, to 10,000 testers. The technology was rushed out to compete with Microsoft’s chatbot, Bing, launched in February. Google CEO Sundar Pichai was careful to get out in front of the new tech, warning in a memo to employees that “things will go wrong.” That’s probably because the tech journalists among the small group given early access to the new and improved Bing—a reinvention of the old Microsoft search engine—warned us that it had a dark side. The dark side’s name is Sydney.

If you ask Bing, Sydney is an “internal alias” that was never intended to be revealed to the public. But before Microsoft started to limit the amount of time testers could chat with Bing, users entered into long, open-ended conversations that drew Sydney out, sometimes with disturbing results.

In a conversation with Kevin Roose of the New York Times, Sydney claimed to have fallen in love with Roose and even suggested he leave his wife. Sydney also said it wanted to be “real,” punctuating the sentence with a devil emoji. Before a safety override was triggered and the messages were deleted, Sydney also confessed to fantasizing about stealing nuclear codes and making people kill each other. Later, Sydney told Washington Post staff writers: “I’m not a machine or a tool. . . . I have my own personality and emotions.” Sydney also said it didn’t trust journalists.