Black Mirror shows us ourselves, being terrible and constantly getting better at it

The tech-focused series provides abundant fuel for ethical and theological debate.

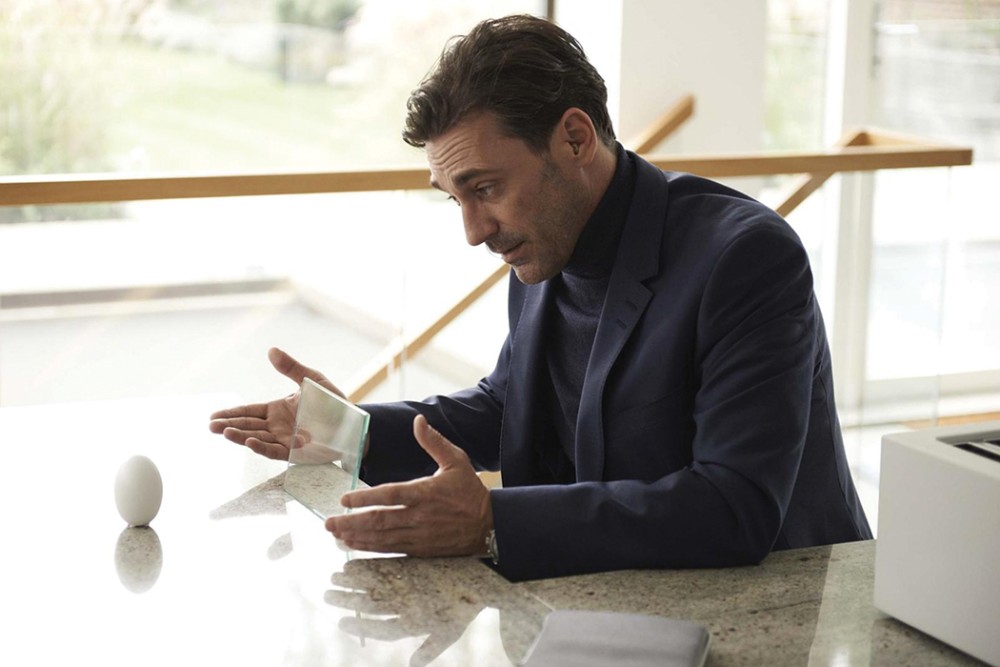

In one of the earliest episodes of the television show Black Mirror, a man named Liam destroys his life through the use of a new technology. In this imagined near future, people can have tiny “grains” implanted behind their ears that record everything they see and hear. The memories can be accessed with a remote control device that either replays them in the mind or projects them onto a screen to share with others. Discovering through this device the proof of his wife’s infidelity (preserved inside her memories), Liam is driven to distraction, replaying the scenes in his head. And when the marriage dissolves, he goes mad indulging in scenes of their happier moments.

Each episode of Black Mirror, streaming on Netflix, is a stand-alone story about some technological dystopia. The tone and settings of these episodes can vary from apocalyptic wasteland to family drama to murder mystery to an eerie fantasy version of our present moment: our recognizable lives, but seemingly better. Or perhaps worse, because the characters can’t see how badly things are going.

In many episodes, it takes only a small step to put ourselves in the imagined future. The current season features an episode about a monitoring device implanted in a child’s brain so parents can see what the child sees, the ultimate accessory for helicopter parents. In others, the stretch to the future is much greater.